Presented at IEEE Engineering in Medicine and Biology 2019 Conference in Berlin, Germany (July 2019)

Abstract: Visual examination forms an integral part of cervical cancer screening. With the recent rise in smartphone-based health technologies, capturing cervical images using a smartphone camera for telemedicine and automated screening is gaining popularity. However, such images are highly prone to image corruption, typically an out-of-focus target or camera-shake blur.

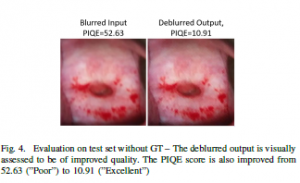

In this paper, we apply a generative adversarial network (GAN) to deblur mobile-phone cervical (MC) images and evaluate the deblurring quality using several measures. Our evaluation process is three-fold. First, we calculate the peak signal-to-noise ratio (PSNR) and the structural similarity (SSIM) on a test dataset for which ground truth is available.
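As an illustration of the first measure, PSNR can be computed from the mean squared error between a ground-truth image and its deblurred counterpart. The snippet below is a minimal sketch, not the evaluation code used in this work: the function name `psnr`, the 8-bit peak value of 255, and the toy arrays are assumptions made for the example.

```python
import numpy as np


def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two same-sized images.

    `max_value` is the largest possible pixel value (255 is assumed here,
    i.e. 8-bit images; use 1.0 for images scaled to [0, 1]).
    """
    reference = np.asarray(reference, dtype=np.float64)
    restored = np.asarray(restored, dtype=np.float64)
    if reference.shape != restored.shape:
        raise ValueError("images must have the same shape")

    # Mean squared error over all pixels (and channels, if present).
    mse = np.mean((reference - restored) ** 2)
    if mse == 0:
        # Identical images: PSNR is unbounded.
        return float("inf")
    return 10.0 * np.log10(max_value ** 2 / mse)


# Toy usage with a synthetic image pair (illustrative data only).
sharp = np.zeros((4, 4), dtype=np.uint8)
deblurred = np.ones((4, 4), dtype=np.uint8)
score = psnr(sharp, deblurred)
```

A higher PSNR indicates that the deblurred image is closer to the ground truth. SSIM is usually taken from an existing library implementation rather than written by hand.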

Next, we calculate the perception-based image quality evaluator (PIQE) score on a test dataset for which no ground truth is available. Finally, we classify a dataset of blurred images and their deblurred counterparts as normal or abnormal MC images. The resulting change in classification accuracy is our final assessment.
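The final assessment, the change in classification accuracy, can be sketched as follows. This is an illustrative example under stated assumptions: the helper names `accuracy` and `accuracy_change`, the 0/1 encoding of normal/abnormal, and the toy label lists are hypothetical and do not reproduce the classifier or data used in this work.

```python
def accuracy(labels, predictions):
    """Fraction of predictions that match the true labels."""
    if len(labels) != len(predictions) or len(labels) == 0:
        raise ValueError("labels and predictions must be non-empty and equal in length")
    correct = sum(1 for y, p in zip(labels, predictions) if y == p)
    return correct / len(labels)


def accuracy_change(labels, preds_blurred, preds_deblurred):
    """Accuracy on deblurred images minus accuracy on the blurred originals."""
    return accuracy(labels, preds_deblurred) - accuracy(labels, preds_blurred)


# Toy usage: 0 = normal, 1 = abnormal (illustrative values only).
true_labels = [0, 1, 1, 0]
blurred_predictions = [0, 0, 1, 1]
deblurred_predictions = [0, 1, 1, 1]
delta = accuracy_change(true_labels, blurred_predictions, deblurred_predictions)
```

A positive difference means the classifier performs better on the deblurred images than on the blurred ones.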

Our evaluation experiments show that deblurring of MC images can potentially improve the accuracy of both manual and automated cancerous lesion screening.